Here’s the thing. I started poking around Solana explorers because I wanted clearer NFT provenance. They gave me quick answers about transfers and mint history. Initially I thought all explorers were roughly the same, but then I dug into indexing differences and UI ergonomics, and that changed how I judged data reliability. My instinct said speed mattered most, but depth of historical indexing and the ability to trace multi-hop transfers matter far more when provenance is on the line.

Whoa! Tracking NFTs feels a lot like detective work sometimes. I follow signatures, program accounts, and memos across multiple transactions. It gets messy when wallets obfuscate routes or batch transfers through mixers. On one hand the UI can make patterns obvious; on the other, poor labeling will bury them, so you need to be skeptical and curious at once.

Here’s the thing. I learned to watch for token balance deltas and program logs. Those two clues reveal a lot about intent and timing. Sometimes a wallet shows a sudden spike in activity and then nothing, which is a red flag for wash trading or quick flips. My gut told me something was off when I saw the same mint authority appearing in unrelated collections.
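
A minimal sketch of the balance-delta check, assuming you already have the `meta` object from a Solana JSON-RPC `getTransaction` response, which carries `preTokenBalances` and `postTokenBalances`. The sample data and the wallet and mint names are placeholders, and the `owner` field may be absent on older responses, so the code reads it defensively.

```python
from collections import defaultdict


def token_balance_deltas(meta):
    """Return {(owner, mint): delta_in_base_units} for one transaction."""
    deltas = defaultdict(int)
    for key, sign in (("preTokenBalances", -1), ("postTokenBalances", 1)):
        for entry in meta.get(key) or []:
            # `amount` is the raw balance in base units, encoded as a string.
            amount = int(entry["uiTokenAmount"]["amount"])
            deltas[(entry.get("owner"), entry["mint"])] += sign * amount
    # Keep only the balances that actually moved.
    return {k: v for k, v in deltas.items() if v != 0}


# Hand-written sample shaped like an RPC response (placeholder names).
sample_meta = {
    "preTokenBalances": [
        {"accountIndex": 1, "mint": "MintA", "owner": "Seller",
         "uiTokenAmount": {"amount": "1", "decimals": 0}},
    ],
    "postTokenBalances": [
        {"accountIndex": 1, "mint": "MintA", "owner": "Seller",
         "uiTokenAmount": {"amount": "0", "decimals": 0}},
        {"accountIndex": 2, "mint": "MintA", "owner": "Buyer",
         "uiTokenAmount": {"amount": "1", "decimals": 0}},
    ],
}

deltas = token_balance_deltas(sample_meta)
for (owner, mint), change in sorted(deltas.items()):
    print(owner, mint, change)
```

Keying on owner rather than token account is a choice: it merges a wallet’s multiple token accounts for the same mint, which is usually what you want for provenance but hides account-level shuffling.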

Really? I know, it sounds dramatic. But the truth is that good explorers give you both raw transaction hex and a friendly timeline. You can export the data or just eyeball patterns. The ability to see NFT owners change over time simplifies provenance checks and valuation assessments, and that advantage compounds when you’re analyzing marketplaces or on-chain royalties.

Here’s the thing. Not every explorer indexes internal program events the same way. I used to assume logs were universal, but different indexing rules mean some events are missing or summarized. That led me down a rabbit hole of cross-referencing data and building a small checklist to validate findings. In practice that checklist saves time and stops me from assuming a wallet is clean or shady without proof.

Whoa! Sometimes I get excited about a single feature. Recently it was advanced token filters. They let me isolate mints by date range and by mint authority in seconds. That cut hours of manual hunting down to minutes. On the flip side, when filters are missing, you end up downloading CSVs and doing spreadsheet spelunking, which is soul-sucking.

Here’s the thing. I keep a simple mental model: speed, completeness, and usability. Speed gets you answers fast. Completeness gives you confidence. Usability prevents mistakes. At first I ranked speed as number one, but after tracking complex provenance cases I reordered those priorities: missing data ruins conclusions, and that is worse than slightly slower queries.

Hmm… I should confess, I’m biased toward explorers that show program-level transfers. Seeing CPI calls and program-derived account changes helps me reconstruct how a token moved. It’s a subtle detail, but it matters. On a few occasions that detail revealed a marketplace-side swap that never hit the public order book, which explains weird price moves.
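
As a rough illustration of what “seeing CPI calls” means, the sketch below rebuilds caller-to-callee edges from a transaction’s `logMessages`, relying on the runtime’s `Program <id> invoke [depth]` lines. The program names in the sample are made up, and an explorer’s own parser is likely more careful than this.

```python
import re

# Matches runtime lines such as "Program <id> invoke [2]".
INVOKE = re.compile(r"^Program (\S+) invoke \[(\d+)\]$")


def cpi_edges(log_messages):
    """Return (caller, callee) pairs in the order the calls happened."""
    stack, edges = [], []
    for line in log_messages:
        match = INVOKE.match(line)
        if not match:
            continue
        program, depth = match.group(1), int(match.group(2))
        del stack[depth - 1:]  # unwind to the parent of this call depth
        if stack:
            edges.append((stack[-1], program))
        stack.append(program)
    return edges


# Placeholder program IDs; real ones are base58 addresses.
sample_logs = [
    "Program Marketplace111 invoke [1]",
    "Program log: Instruction: Buy",
    "Program TokenProg111 invoke [2]",
    "Program log: Instruction: Transfer",
    "Program TokenProg111 success",
    "Program SystemProg111 invoke [2]",
    "Program SystemProg111 success",
    "Program Marketplace111 success",
]

edges = cpi_edges(sample_logs)
for caller, callee in edges:
    print(f"{caller} -> {callee}")
```

Depth 1 is the top-level instruction, so any edge in the output is a cross-program invocation that a summary view might not show.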

Here’s the thing. The best explorers combine transaction details with analytics dashboards so you can shift perspectives. You start with a wallet, then jump to collection heatmaps, and then zoom into mint timelines. That flow mimics how my brain investigates: macro glance, then micro forensic step. My process is not perfect, but it’s fast and repeatable, which is crucial when you monitor many collections.

Seriously? Yes. There are explorers that surface NFT metadata evolution—like updates to off-chain URIs or royalty recipients—and that is gold. You can see when an artwork’s metadata was changed post-mint, which sometimes correlates to disputes or token burns. Those breadcrumbs are essential for risk assessment and for telling a story to prospective buyers or partners.

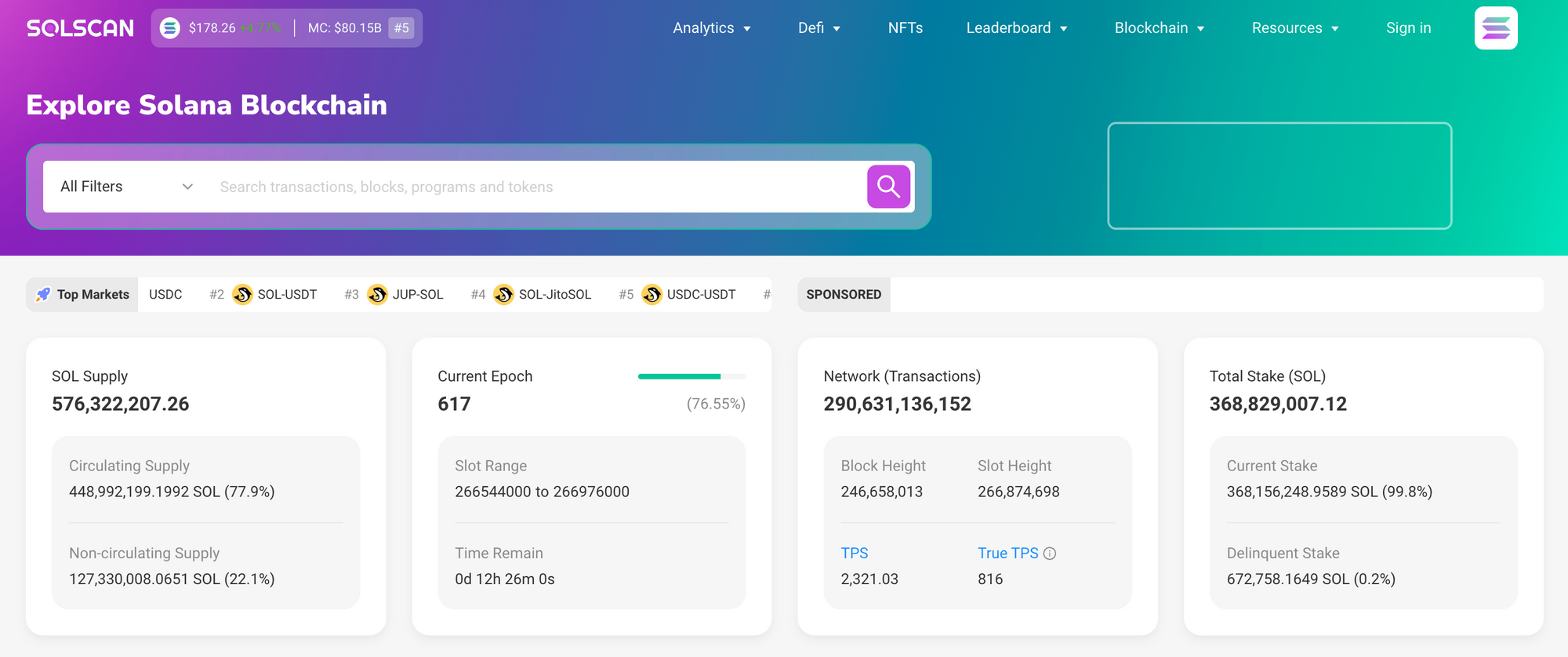

Here’s the thing. I use Solscan for a lot of that initial triage and then cross-check with other tooling when I need more advanced analytics. The interface is intuitive and surfaces logs and token movement clearly, and the search ergonomics let me pivot fast between addresses, signatures, and programs. My instinct said check it first, and that habit stuck; it’s become part of my daily workflow.

Whoa! Little annoyances bug me though. Fuzzy search matches can be finicky, and sometimes page loads hiccup under heavy traffic. It’s not game over, but those moments slow you down if you’re racing to verify a transaction during a live mint or a flash sale. Also, small quirks like inconsistent labeling between token accounts can be confusing, so they are very important to watch out for.

Here’s the thing. When you track NFTs you learn to triangulate. A single explorer gives a view, but cross-referencing seals the case. I will often snapshot on-chain evidence, copy raw logs, and annotate a timeline. On one occasion that practice helped me prove a provenance claim when a collector disputed an apparent transfer—proof matters and screenshots with tx hashes win conversations.

Whoa! Okay, so check this out—analytics matter beyond just visuals. Aggregate stats like holder concentration, recent transfer velocity, and average time-to-sale feed into valuation models. When a collection has a handful of whales controlling most supply, that changes your risk calculus. Seeing those distributions on a single pane informs better strategy, whether you’re flipping, holding, or building tools on top.
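
Holder concentration is easy to compute yourself once you have a holder snapshot. This sketch assumes a plain `{wallet: token_count}` mapping (however you obtained it) and reports the share held by the top N wallets plus a Herfindahl-Hirschman index; the wallets and numbers are invented.

```python
def holder_concentration(holdings, top_n=10):
    """Return (share held by the top_n wallets, HHI) for a holder snapshot."""
    total = sum(holdings.values())
    if total == 0:
        return 0.0, 0.0
    ranked = sorted(holdings.values(), reverse=True)
    top_share = sum(ranked[:top_n]) / total
    # HHI: sum of squared ownership shares; 1.0 means a single holder.
    hhi = sum((count / total) ** 2 for count in ranked)
    return top_share, hhi


# Invented snapshot: two whales and three small holders.
snapshot = {"whale1": 50, "whale2": 30, "a": 10, "b": 5, "c": 5}
top2_share, hhi = holder_concentration(snapshot, top_n=2)
print(f"top-2 share: {top2_share:.0%}, HHI: {hhi:.3f}")
```

Where you draw the line for “too concentrated” is a judgment call; the point is to track the number over time rather than trust a single reading.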

Here’s the thing. Beyond UI, API access is where explorers become foundations for automation. I built small scripts that poll endpoints for new mints and alert me when suspicious patterns emerge. Initially I thought polling on-chain directly would be necessary, but reliable explorer APIs abstract complexities and save development time. That said, rate limits and data freshness are real constraints.
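
Here is one way such a polling script might be shaped, with the explorer call injected so the loop stays API-agnostic. `fetch_latest`, the `RateLimited` exception, and the item shape (a dict with a `signature` key) are all hypothetical stand-ins, not any explorer’s real client.

```python
import time


class RateLimited(Exception):
    """Raised by the (hypothetical) fetcher when the API says to slow down."""


def poll_new_mints(fetch_latest, on_new, rounds,
                   base_delay=1.0, max_delay=60.0, sleep=time.sleep):
    """Poll `rounds` times, alert once per new signature, back off on limits."""
    seen = set()
    delay = base_delay
    for _ in range(rounds):
        try:
            items = fetch_latest()
        except RateLimited:
            delay = min(delay * 2, max_delay)  # exponential backoff
        else:
            delay = base_delay  # healthy response: reset the delay
            for item in items:
                if item["signature"] not in seen:
                    seen.add(item["signature"])
                    on_new(item)
        sleep(delay)
    return seen


# Demo with a scripted fetcher instead of a real API.
script = iter([[{"signature": "s1"}], RateLimited,
               [{"signature": "s1"}, {"signature": "s2"}]])


def fake_fetch():
    step = next(script)
    if step is RateLimited:
        raise RateLimited()
    return step


alerts, delays = [], []
poll_new_mints(fake_fetch, alerts.append, rounds=3, sleep=delays.append)
print([a["signature"] for a in alerts], delays)
```

Injecting `sleep` keeps the loop testable without waiting; in real use you would leave the default and run it with a much larger `rounds` or an outer loop.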

Hmm… I should add a practical tip. Always validate API timestamps against confirmed slot timestamps. Explorer caches sometimes show slightly stale info, and that small offset can mislead time-sensitive bots. My mistake once was reacting to a cached state and losing a bid by milliseconds. Lesson learned. I’m not 100% sure about the exact delta every time, but I now include a double-check step in my pipeline.
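
A sketch of what that double-check could look like, assuming you can get a slot and block time from the explorer’s response and the same pair from your own RPC node (for example via `getSlot` at `confirmed` commitment and `getBlockTime`). The tolerances are arbitrary placeholders; tune them to your own latency budget.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    slot: int        # slot the data claims to reflect
    block_time: int  # Unix seconds reported for that slot


def staleness(explorer, chain):
    """Return (slots_behind, seconds_behind) of the explorer vs. the chain."""
    return chain.slot - explorer.slot, chain.block_time - explorer.block_time


def safe_to_act(explorer, chain, max_slots_behind=2, max_seconds_behind=2):
    """True only when the explorer view is within both tolerances."""
    slots, seconds = staleness(explorer, chain)
    return slots <= max_slots_behind and seconds <= max_seconds_behind


# Invented values: the explorer view is five slots behind the node.
cached = Observation(slot=100, block_time=1_700_000_000)
fresh = Observation(slot=104, block_time=1_700_000_001)
chain = Observation(slot=105, block_time=1_700_000_002)

print(staleness(cached, chain), safe_to_act(cached, chain))
print(staleness(fresh, chain), safe_to_act(fresh, chain))
```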

Here’s the thing. Forensic tracing often requires following multi-hop transfers across program accounts, and that can hide behind token wrapping or surrogate transfers. Good explorers expose program logs and CPI chains so you can see the call graph. When that’s visible you can reconstruct a movement chain end-to-end, and that capability is why I trust some platforms more than others.
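
For a supply-of-one NFT, reconstructing the chain can be as simple as walking its transfers in slot order. The sketch assumes you have already flattened transfers into records with `slot`, `mint`, `source`, `destination`, and `signature` fields, where source and destination are owner wallets; that record shape and every name in the sample are illustrative.

```python
def trace_nft_path(transfers, mint, start_wallet):
    """Follow one mint forward from start_wallet; return (wallets, signatures)."""
    path, hops = [start_wallet], []
    current = start_wallet
    relevant = (t for t in transfers if t["mint"] == mint)
    for t in sorted(relevant, key=lambda t: t["slot"]):
        if t["source"] == current:
            hops.append(t["signature"])
            current = t["destination"]
            path.append(current)
    return path, hops


# Invented transfer records, deliberately out of order.
transfers = [
    {"slot": 20, "mint": "NFT1", "source": "B", "destination": "C", "signature": "sig3"},
    {"slot": 10, "mint": "NFT1", "source": "A", "destination": "Escrow", "signature": "sig1"},
    {"slot": 11, "mint": "OTHER", "source": "X", "destination": "Y", "signature": "sigX"},
    {"slot": 12, "mint": "NFT1", "source": "Escrow", "destination": "B", "signature": "sig2"},
]

path, hops = trace_nft_path(transfers, "NFT1", "A")
print(" -> ".join(path), hops)
```

Wrapped assets break this simple walk because the mint changes mid-trail, so in those cases you would need to link the wrap and unwrap transactions by hand first.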

Seriously? Yup. Also, watch out for presentation traps. Some dashboards aggregate transfers into high-level summaries, which lose nuance. If you rely only on summaries you might miss laundering patterns or fee-exempt transfers. In one case I almost missed an internal transfer sequence because the summary omitted zero-value program calls that were actually meaningful.

Here’s the thing. Community features and annotations make a big difference. When other users annotate suspicious wallets or flag airdrops, that crowdsourced intelligence saves detective time. But, be careful—crowdsourced flags can be noisy and sometimes wrong. My instinct says treat them as signals, not facts, and then chase the on-chain evidence yourself.

Whoa! I’m partial to explorers that embrace exportability. CSV exports, raw JSON endpoints, and shareable permalinks let you collaborate or escalate findings. When I assemble a report for a client or a partner, those exports make the narrative convincing. Also, permalinks help when you need to reference a transaction in a legal or arbitration context.

Here’s the thing. You should use explorers as tools, not gospel. They surface data, but interpretation is your job. Initially I trusted an early read, then realized a program update had changed how certain transfers were logged, so context matters. The on-chain data is immutable, but how it’s presented can vary with upgrades and indexing quirks, so stay skeptical.

Hmm… I tend to prefer explorers with clear changelogs and transparent indexing docs. When the provider explains how they index inner instructions, or how they parse metadata updates, I can better trust their outputs. Lack of documentation is a red flag for me because then you can’t tell whether an absence of evidence is a real absence or just an indexing gap.

Here’s the thing. If you care about NFTs on Solana, learn to read program IDs and token account patterns. Those identifiers tell stories. They reveal mint authorities, creators, and sometimes fee paths. I teach new analysts to spot these quickly because it speeds up triage—small investments in pattern recognition yield large time savings during investigations.

Whoa! Also, be ready for edge cases. Burned tokens, wrapped assets, and cross-program swaps complicate trails. One time a mint authority rotation created confusion until I checked the program logs. Those small complications are the norm, not the exception, and they reward curiosity and patience.

Here’s the thing. I still make mistakes. I once misattributed an ownership transfer because two wallets used similar nicknames and I didn’t verify the transaction signature. I’m human; sometimes I repeat steps and something slips. But processes and checklists reduce those errors and make findings defensible when you present them to others.

Seriously? Yes. Final thought: explorers are essential, but they work best as part of a toolkit. Combine fast triage tools, deep forensic indices, and automated alerts for a robust workflow. If you want a place to start, I often check Solscan first for clarity and then push into deeper analytics when needed.

Quick Practical Notes

Here’s the thing. When you evaluate an explorer, look for raw logs, export options, and program-level visibility. Check indexing docs and API reliability. Use annotations carefully and always cross-verify suspicious claims with raw tx data. Keep automated checks but don’t let them replace manual spot-checks…

FAQ

Which explorer should I use for NFT provenance?

Start with explorers that expose inner instructions and metadata changes, then cross-check with APIs for automation. I often start with Solscan and move to specialized analytics if needed.

How do I avoid false positives when flagging suspicious wallets?

Triangulate evidence: check token deltas, program logs, and ownership timelines. Treat community flags as leads, not verdicts. Keep a checklist and export raw txs for records.